M007 — EXPERIMENTATION: THE CLOSEST WE GET TO TRUTH

Decision confidence does not come from better models. It comes from better alignment.

Measurement explains. Experiments decide.

That distinction matters more than most organisations are willing to admit.

Most measurement systems are very good at telling us what happened. Far fewer are good at telling us whether we should act. The gap between explanation and decision is where confidence breaks down, politics creeps in, and gut feel quietly retakes control.

Experimentation is not the pursuit of truth. It is the closest we get to decision-grade confidence.

Why Most Measurement Fails at the Moment of Decision

The problem is rarely a lack of data.

It is a lack of clarity about what kind of certainty a decision actually requires.

Three common mistakes show up again and again:

Correlation is mistaken for causation

Precision is mistaken for confidence

Speed is mistaken for truth

Attribution models explain patterns but struggle to isolate cause. MMM explains long-term dynamics but rarely answers campaign-level questions with enough confidence to act quickly. Platform reporting moves fast but is structurally biased toward crediting itself.

None of these are broken. They are simply being asked to do jobs they were never designed to do.

The failure is not analytical. It is epistemic.

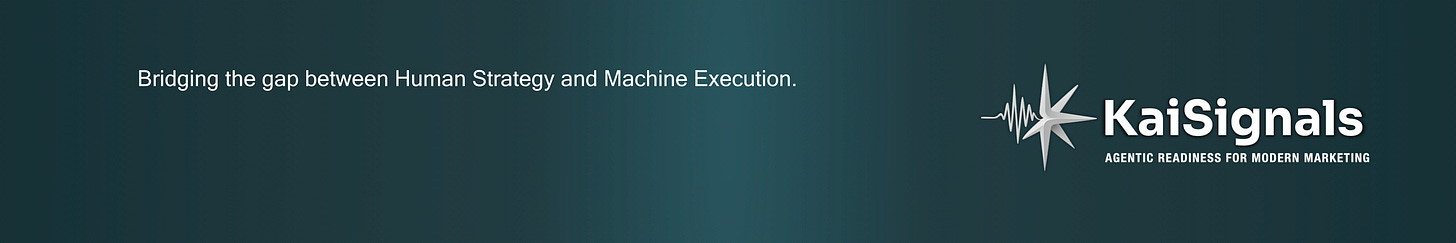

Horses for Courses: Matching the Question to the Method

Experimentation works best when it is treated as a progression, not a menu.

Different questions demand different levels of confidence. As the stakes rise, so should the rigour of the method. Precision increases as constraints increase. Maturity is knowing when enough is enough.

A simplified confidence ladder looks like this.

Creative A/B Testing

Directional by nature

Fast to run

Biased by design

This is the right tool when the question is simple: which way is better?

It is not designed to answer questions about incrementality or business impact. Used correctly, it accelerates learning. Used incorrectly, it creates false certainty.

Conversion Lift (Exposed vs Unexposed)

Stronger causal signal

Ignores many externalities

Can run alongside attribution

This is appropriate when the question is whether advertising caused a measurable change in behaviour. It improves confidence without demanding heavy operational change.

It is not comprehensive, but it is a meaningful step up from correlation.

Causal Impact and Difference-in-Difference

Accounts for wider external factors

Strong post-factor analytical power

Wider confidence intervals, but materially more robust causal inference

These methods ask a harder question: what changed, controlling for noise?

They trade precision for robustness. When designed well, they surface effects that simpler methods miss. In practice, this is often where organisations first encounter uncomfortable results.

In one case from a previous life, this approach showed a roughly 12 percent improvement once external factors were properly accounted for. Not dramatic. But real.

Match Market and Geo Experiments

Highest causal confidence

Operationally expensive

Power constrained

This is the closest we get to causal truth in real-world marketing.

It is the right choice when decisions are large, irreversible, or strategic. It is also the most commonly misused. Poor power, leaky geographies, and overlapping tests can quickly erode confidence if governance is weak.

Even with perfect design, these experiments are not flawless. They are simply better.

Conversion Lift Studies with Difference-in-Difference (CLS DoD)

Used when Geo or Match Market designs are not feasible

Combines exposed vs unexposed conversion lift with Difference-in-Difference controls

Explicitly focused on allocation and marginal return, not raw lift

CLS DoD designs extend standard conversion lift by layering a Difference-in-Difference framework on top. The lift study establishes directional incrementality between exposed and unexposed audiences. The DoD structure then controls for broader market movement, seasonality, and external shocks.

These designs are most valuable when the constraint is not intent, but power. When geographies are too small, markets too entangled, or parallel tests unavoidable, CLS DoD provides a disciplined alternative to full Geo or Match Market experiments.

They trade experimental purity for practical scalability, but retain causal structure. The objective is not to prove that advertising works. It is to understand where incremental budget delivers the highest marginal return, and to inform growth curve allocation decisions.

In practice, this is where experimentation starts to shape investment strategy rather than justify past decisions.

Experimentation Is a System, Not a Test

Most organisations do not lack tests. They lack an experimentation system.

High-performing teams treat experimentation as an always-on loop:

Hypotheses are tied to commercial outcomes, not metrics

Tests are prioritised by impact, confidence, and feasibility

Designs are fair and interpretable

Learnings are repeated, not celebrated once

Results are socialised as learning, not success or failure

Only proven ideas earn scale

A simple rule holds surprisingly well: if a test cannot change a decision, it is not a test. It is theatre.

Why Most Testing Cultures Collapse

The failure modes are rarely technical.

They are organisational.

Common patterns include:

Single owners or isolated specialists

Political prioritisation of what gets tested

Learnings that are not banked or reused

Reversion to gut feel under pressure

Culture is often misunderstood here. Culture is not saying that experimentation is valued. Culture is deciding what happens when a result contradicts a senior opinion.

Without clear governance, experimentation becomes episodic. With it, learning compounds.

How Methods Actually Work Together

Experiments do not replace other measurement approaches. They anchor them.

Used well:

Experiments calibrate bias in attribution

They inform and validate MMM

They create guardrails for platform optimisation

This is where the combination matters.

In one example, a Difference-in-Difference analysis showed over 100 percent impact. Not because performance doubled, but because budget was reallocated away from low-return activity toward higher-return opportunities. The learning was not about lift. It was about allocation.

That distinction is the difference between reporting performance and shaping strategy.

The Real Gold Standard Is the Operating Model

Experiments matter because they force clarity.

They make assumptions explicit. They surface trade-offs. They reduce the cost of being wrong.

But the real advantage does not come from any single method. It comes from an operating model where:

The right question is asked first

The method is chosen deliberately

Learning compounds over time

Experimentation is not how teams learn faster. It is how they make fewer expensive mistakes with more confidence.

Most organisations do not need more tests. They need a test system.

If you are seeing similar challenges in your organisation, this is exactly the problem we work on at KaiSignals: helping teams move from analysis to clearer decisions.